GSA SER Verified Lists Vs Scraping

Understanding the Foundation of Link Building with GSA SER

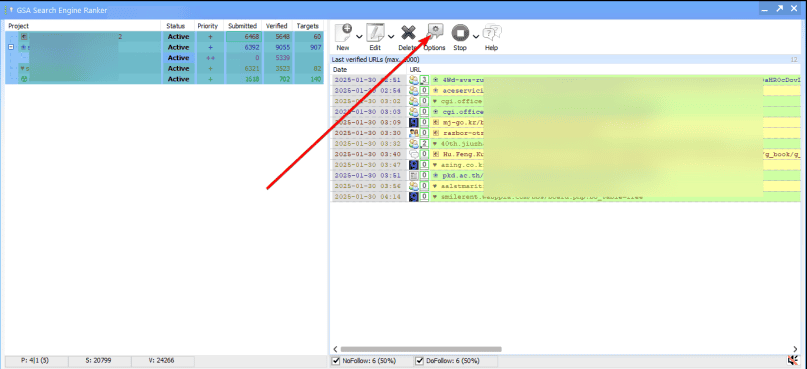

Anyone who runs GSA Search Engine Ranker eventually faces a critical supply-chain decision: where do your target URLs come from? The engine is only as powerful as the lists you feed it, and two dominant schools of thought have emerged. Some users swear by imported verified lists, while others insist that real-time scraping is the only way to stay ahead. This divide sits at the heart of the GSA SER verified lists vs scraping debate, and understanding the mechanics, costs, and hidden pitfalls of each method is essential before you commit your project to one path.

What Exactly Are GSA SER Verified Lists?

Verified lists are pre-compiled bundles of URLs that have been tested and confirmed to accept submissions. They come from marketplaces, private forums, or dedicated vendors who run their own instances of GSA SER day and night, checking millions of potential targets and filtering out the ones that actually work. A typical verified list might contain anything from a few thousand to several hundred thousand URLs, sorted by platform type, PageRank, or domain authority. These lists are often sold with daily updates, so you are essentially buying the output of someone else’s verification grind.

The immediate appeal is speed. You download a file, import it into GSA SER, and the software can start firing submissions within minutes. There is no waiting period for proxies to crawl the web, no CPU cycles burned on footprint searches, and no wasted attempts on dead engines or sites that have tightened their moderation. For marketers who value time over raw freshness, this plug-and-play model can feel like a cheat code.

The Raw Alternative: Live Scraping Unpacked

Scraping, in the context of GSA SER, means letting the software hunt for targets on its own using search engines like Google, Bing, or custom footprints. You configure a set of search queries, define the platforms you want, and unleash the scraper to build its own target database from scratch. Every URL it discovers is new to your project, untouched by the thousands of other users who might have bought the same verified list.

Scraping is not a one-click solution. It demands a solid proxy setup, careful footprint management, and patience. A fresh scrape can take hours or even days to produce a substantial list, and during that time you are burning resources on CAPTCHA solving, bandwidth, and the constant risk of search engine blocks. Still, there is a philosophical beauty in the process: you are generating completely unique targets that have never appeared in a mass-distributed pack, which in theory shaves off the footprint left by everyone else using identical URLs.

GSA SER Verified Lists vs Scraping: A Side-by-Side Breakdown

To move beyond surface-level preferences, it helps to split the comparison into concrete factors that affect performance, cost, and long-term success. The choice isn't just a matter of taste; it is a genuine operational fork that every serious user must navigate.

1. Uniqueness and Footprint Reduction

The most cited advantage of scraping is uniqueness. If you scrape your own targets, you are likely the only person hitting those exact URLs with that exact anchor text profile. Verified lists, by contrast, are accessed by dozens or hundreds of buyers simultaneously. Search engines can detect patterns when the same obscure Turkish blog comment page suddenly gets a wave of spammy submissions from different referring domains. Shared lists accelerate this pattern recognition, potentially devaluing the links before you even see a ranking bump.

However, uniqueness is not an absolute shield. Even scraped lists often converge on popular platforms that every GSA SER user eventually finds. The real edge lies in the long tail—those forgotten directories and niche forums that only a deep, constantly evolving scrape can uncover. Verified list vendors rarely chase these targets because they don't scale commercially; it is more profitable to sell a huge volume of easy-to-verify platforms. If you want to disappear into the noise of the web, scraping gives you access to corners that pre-made lists ignore.

2. Time Investment and Speed of Deployment

Verified lists win the sprint race every time. Suppose you need to launch a campaign today for a time-sensitive offer. Importing a fresh verified list and letting GSA SER loose immediately can generate hundreds of live links within hours. Scraping, on the other hand, has a cold start problem. You first need to seed the search engine queries, wait for the scraper to collect candidates, and then run the verification phase that the list vendor already did for you. This sequential process can delay meaningful link acquisition by a day or more.

Long-term, the time equation shifts. Maintaining a scraping infrastructure—rotating proxies, refreshing footprints when search engines change their layout, and dealing with IP bans—becomes a recurring maintenance task. A verified list subscription outsources that overhead. You trade a monthly fee for the hours you would have spent nursing a scraping server back to life after a proxy pool collapse. For solo operators balancing multiple projects, that trade-off often makes sense.

3. Success Rate and Waste Reduction

A well-curated verified list boasts an enviable verification rate, often above 80%. That means out of every hundred targets you attempt, at least eighty will result in a successful submission. Freshly scraped and unverified URLs, by contrast, might start with a success rate as low as five percent. The scraper discovers a huge haystack of potential targets, but most turn out to be broken, already spammed to death, or locked behind login walls that GSA SER cannot bypass. The resulting churn wastes proxies, CAPTCHA credits, and time that could have been spent productively.
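To make the gap concrete, here is a minimal sketch of the arithmetic, using the 80% and 5% figures from the paragraph above as illustrative inputs rather than measurements. The function names are hypothetical and are not part of GSA SER.

```python
def attempts_per_success(success_rate: float) -> float:
    """Expected number of submission attempts needed for one success."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1 / success_rate


def wasted_attempts(total_attempts: int, success_rate: float) -> int:
    """Attempts that do not end in a successful submission."""
    return total_attempts - round(total_attempts * success_rate)


# Illustrative rates from the text, not benchmarks.
verified_rate = 0.80
scraped_rate = 0.05

print(attempts_per_success(verified_rate))   # 1.25
print(attempts_per_success(scraped_rate))    # 20.0
print(wasted_attempts(1000, verified_rate))  # 200
print(wasted_attempts(1000, scraped_rate))   # 950
```

At these rates, every successful submission from a raw scrape costs roughly sixteen times as many attempts as one from a verified list, and each of those attempts consumes proxy bandwidth and possibly a CAPTCHA credit.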

Proponents of scraping argue that this waste is an investment. Each failed attempt teaches the engine which footprints are dead, progressively tightening the scrape parameters. Over weeks, the self-built target database becomes extremely potent and tailored to your exact niche. But the initial waste is undeniable, and for projects with tight budgets for CAPTCHA solvers or limited VPS resources, a high-failure-rate beginning can be financially painful.

4. Platform Freshness and Algorithmic Avoidance

Search engines and platform owners constantly update their code. A verified list from last week might be full of targets that have since patched the vulnerability GSA SER exploits, changed their comment form, or simply shut down. Quality list vendors update frequently, but there is always a lag. Scraping operates in real time, so it naturally filters out platforms that have disappeared overnight. If a major engine tweak kills a particular type of forum, a live scrape will simply stop finding those URLs, whereas a verified list might keep feeding them to your project until the next update.

This freshness ties directly into the resilience side of the GSA SER verified lists vs scraping debate. When a big spam purge happens, the users who rely entirely on static lists often see their link velocity plummet while they wait for a vendor to patch the gaps. Scrapers, provided they adjust their footprints, can pivot on the same day. The ability to reroute your target acquisition in a crisis is one of the strongest arguments for building an independent scraping pipeline.

5. Cost Analysis: Capex vs Opex

Verified lists appear cheap at first glance. A monthly subscription might cost the same as one or two pizza dinners, giving you a firehose of ready-to-use URLs. Scraping, however, forces you to pay for robust proxies, multiple search engine API keys or custom scraping tools, and significantly higher CAPTCHA solving fees because of the massive verification volume. The upfront and ongoing costs of a serious scraping setup can easily dwarf the price of the best verified list service.

Yet cost must be weighed against return. If scraped links prove more durable and pass more ranking power because of their uniqueness, the higher expense might be justified. A link that sticks for a year is worth more than ten links that get deindexed in a month. The calculation is not just about dollars spent, but about the cost per surviving link. Marketers who track link longevity often discover that a blended approach—using a verified list for bulk foundation links and scraping for high-value, unique targets—optimizes the budget better than either extreme.
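The cost-per-surviving-link idea can be sketched in a few lines. Every number below is a made-up placeholder chosen only to show the calculation; substitute figures from your own tracking. The function name and parameters are hypothetical.

```python
def cost_per_surviving_link(monthly_cost: float, links_built: int,
                            survival_rate: float) -> float:
    """Monthly spend divided by the links still live at the end of the
    tracking window (survival_rate is the fraction that survived)."""
    surviving = links_built * survival_rate
    if surviving <= 0:
        raise ValueError("no surviving links; cost per link is undefined")
    return monthly_cost / surviving


# Hypothetical inputs: (monthly cost in dollars, links built, survival rate).
scenarios = {
    "verified list": (30.0, 1000, 0.10),
    "scraping rig": (150.0, 400, 0.60),
}

for name, (cost, built, survival) in scenarios.items():
    per_link = cost_per_surviving_link(cost, built, survival)
    print(f"{name}: ${per_link:.3f} per surviving link")
# verified list: $0.300 per surviving link
# scraping rig: $0.625 per surviving link
```

With these placeholder inputs the list still comes out cheaper per surviving link; change the survival rates or costs and the ranking can flip, which is why the measurement matters more than the sticker price.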

Blending the Two Worlds for Maximum Efficiency

Rarely is the choice binary. Most advanced GSA SER operators eventually develop a hybrid model that leverages the strengths of both methods while insulating against their weaknesses. You might use a daily verified list to keep a steady drip of mid-tier links flowing without constant supervision, while allocating a dedicated scraping rig to hunt for obscure platforms that nobody else is touching. This allows you to spend the expensive proxy and CAPTCHA resources only on the most promising scraping avenue, rather than burning them on generic targets that a $30 list already covers.
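One way to avoid spending scraping resources on targets the purchased list already covers is to filter scraped candidates by host before they enter the project. The sketch below assumes both sources are plain lists of absolute URLs; the helper names and sample URLs are hypothetical, and this is a standalone illustration rather than a GSA SER feature.

```python
from urllib.parse import urlsplit


def host_of(url: str) -> str:
    """Lower-cased host without a leading 'www.', or '' if none is found.
    URLs without a scheme (e.g. 'example.com/page') yield ''."""
    host = urlsplit(url.strip()).hostname or ""
    return host[4:] if host.startswith("www.") else host


def unique_to_scrape(scraped: list[str], verified: list[str]) -> list[str]:
    """Keep one scraped URL per host, skipping hosts the verified list covers."""
    covered = {host_of(u) for u in verified}
    seen: set[str] = set()
    result = []
    for url in scraped:
        host = host_of(url)
        if not host or host in covered or host in seen:
            continue
        seen.add(host)
        result.append(url.strip())
    return result


verified = [
    "http://www.example.com/blog/post-1",
    "https://forum.example.org/thread/9",
]
scraped = [
    "https://example.com/guestbook",            # host already in the list
    "http://niche-directory.example.net/add",   # new host, kept
    "http://niche-directory.example.net/submit",  # same host again, skipped
    "https://forum.example.org/register",       # host already in the list
]

print(unique_to_scrape(scraped, verified))
# ['http://niche-directory.example.net/add']
```

Matching on host rather than full URL is a deliberate simplification: it treats any page on a covered domain as already handled, which may be too aggressive for large multi-user platforms.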

Another smart layer is to use scraping to validate and extend a verified list. Import the purchased targets, let GSA SER run its normal process, but configure the software to scrape for related pages or sister sites from the successful verified targets. You essentially use the list as a breeding ground for an ever-expanding, semi-unique network. This sidesteps the initial cold-start failure rate of pure scraping while injecting a dose of freshness that mass-distributed lists lack.

Pitfalls That Hit Both Sides Equally

Regardless of whether your targets come from a vendor or your own scraping server, some universal traps remain. Low-quality content, over-optimized anchor text, and ignoring platform relevance will tank a campaign built on the most exclusive target database in the world. Verified lists and scraped URLs both end up in the same link graph, and Google’s algorithms judge the link, not its origin story. If you are blasting spun gibberish onto irrelevant blogs, even a custom-scraped list of completely undiscovered URLs will eventually earn a penalty.

Domain quality filtering is another shared responsibility. A verified list might advertise high metric scores but still include spam-riddled domains that get penalized later. A scraper can be configured to avoid known toxic patterns, but if you’re not careful it may also pull in entire private blog networks (PBNs) that share an obvious footprint. The tool only does what you tell it. Neither method replaces the need for a smart linking strategy, tiered structures, and continuous monitoring of where your links actually land.

Making the Call for Your Specific Project

When the GSA SER verified lists vs scraping question comes up in communities, the answer almost always depends on scale and tolerance for risk. If you are managing a handful of low-competition affiliate sites and want to set and forget as much as possible, a high-quality verified list from a reputable vendor will likely meet your needs without the headache of scraping infrastructure. You can pour your time into content and on-page SEO while the list does the heavy lifting.

If you operate in aggressive, penalty-prone niches or need to protect a flagship money site at all costs, scraping for unique targets becomes a logical investment. The control you gain over target selection and the reduced footprint from not sharing a list with hundreds of other users can make the difference between a long-term ranking asset and a ticking time bomb. In these scenarios, the extra expense and time commitment are an insurance policy, not an indulgence.

Ultimately, the most successful GSA SER users never stop testing. They track which imported lists produce the lowest removal rates, they experiment with different scraping engines and proxy configurations, and they constantly read the signals their backlink profile sends. The tool is a canvas, and whether you paint with verified colors or scrape your own pigments, the final picture depends on the artist’s hand, not the origin of the paint.